Running HY-World 2.0 on Strix Halo: 3D World Reconstruction on an AMD iGPU

Porting Tencent's CUDA-only 3D world model to AMD's Radeon 8060S via ROCm Docker, flash-attention CK kernels, a fully compiled gsplat with wave32 patches, and complete 3D reconstruction output including Gaussian splats.

Tencent released HY-World 2.0 yesterday, an open-source 3D world model that takes images or video and produces real, editable 3D assets: meshes, Gaussian splats, point clouds, depth maps, camera parameters. Unlike video world models that generate pixels you can watch but never touch, HY-World 2.0 outputs things you can import into Blender, Unity, or Unreal Engine. The currently released component is WorldMirror 2.0, a ~1.2B parameter feed-forward model that does multi-view 3D reconstruction from photos or video in a single forward pass.

The catch: the entire stack is built around NVIDIA CUDA 12.4. No ROCm support, no AMD mention anywhere in the docs. I wanted to see if it could run on my Strix Halo machine anyway.

The Three Blockers

After reading through the codebase, I identified three hard blockers preventing this from running on AMD hardware:

| Blocker | What | Why It’s a Problem |

|---|---|---|

| gsplat | Gaussian splatting library | Pinned to a CUDA 12.4 prebuilt wheel. AMD’s ROCm fork only supports MI300X/MI325X datacenter GPUs. |

| flash-attention | Attention kernel library | Imported unconditionally at module level with no fallback. If neither FA3 nor FA2 imports, the model won’t even load. |

| PyTorch | CUDA-only wheels | README pins torch==2.4.0+cu124. Unusable on AMD. |

The PyTorch problem is straightforward: use the rocm/pytorch Docker image, which I’d already proven works on gfx1151 during my OmniVoice experiments. The other two required more work.

Flash-Attention: CK Backend for RDNA 3.5

The attention layer in WorldMirror 2.0 tries to import FlashAttention v3, falls back to v2, and has no outer exception handler. If both imports fail, the module crashes at load time. The actual attention computation already has a PyTorch SDPA fallback for fp32, but the import has to succeed first.

The good news: PR #2400 merged on March 26, 2026, adding RDNA 3 (gfx11) and RDNA 4 (gfx12) support to flash-attention’s Composable Kernel backend. This means flash-attention can now compile for gfx1151 from source.

The build required --no-build-isolation (the Docker build environment has no GPU, so the setup.py can’t auto-detect the arch) and explicit GPU_ARCHS=gfx1151:

export GPU_ARCHS=gfx1151

export PYTORCH_ROCM_ARCH=gfx1151

export MAX_JOBS=8

pip install --no-build-isolation -e .This compiles every CK FMHA kernel variant for gfx1151. It took 2 hours and 15 minutes to complete. Each kernel is a deeply nested C++ template instantiation compiled through hipcc, and there are dozens of variants covering different head dimensions, batch configurations, and data types. The "still running..." messages ticked by at ~60 second intervals for the entire duration.

But it worked. flash_attn-2.8.4 installed successfully, enabling bf16 inference.

gsplat: Three Bugs and a Full Build

gsplat is used for Gaussian splatting rendering, converting the model’s predicted splat attributes into rendered views. The import chain is unconditional, even if you disable the GS head at runtime, gsplat must be importable at the Python level. Getting it to compile for gfx1151 required fixing three separate bugs in AMD’s ROCm fork.

Bug 1: Missing GLM headers. PyTorch’s hipify layer converts CUDA sources to HIP by copying them into a gsplat/hip/ directory. The bundled GLM (OpenGL Mathematics) library in gsplat/cuda/csrc/third_party/glm/ gets copied too, but hipify creates symlinks with deeply nested relative paths that break GLM’s internal #include "scalar_constants.inl" directives. The fix: delete the bundled GLM entirely and install GLM headers from source to /usr/local/include/, then add that to the include path in setup.py. System-installed GLM has a clean directory structure that hipify can’t corrupt.

Bug 2: GLM CUDA version check. HIP defines __CUDACC__ (to enable CUDA compatibility mode) but doesn’t set __CUDACC_VER_MAJOR__. GLM’s platform.h sees the CUDA flag, checks the version, finds it’s 0, and emits #error "GLM requires CUDA 7.0 or higher". The fix: add -D__CUDACC_VER_MAJOR__=12 -D__CUDACC_VER_MINOR__=0 -DGLM_FORCE_PURE to both the cxx and hipcc compiler flags.

Bug 3: Hardcoded wavefront size 64. This was the most interesting one. The ROCm fork was written for MI300X (CDNA), which uses 64-wide wavefronts. RDNA 3.5 uses 32-wide wavefronts, same as NVIDIA warps. The fork hardcodes 64 everywhere: rocprim::warp_reduce<float, 64>, cg::tiled_partition<64>, threadIdx.x % 64, loop bounds iterating over warp lanes. ROCm’s rocprim library has a compile-time static assertion that catches the mismatch: you can’t use a virtual wave size of 64 on a target with 32-wide wavefronts. The fix: sed-replace all warp-size-related 64 constants with 32 across Utils.cuh and every .cu source file.

The ROCm fork’s setup.py also dynamically detects the GPU architecture via rocminfo, which fails during Docker build (no GPU access) and falls back to gfx942 (MI300X). A global sed -i 's/gfx942/gfx1151/g' handles that.

After all patches, the ROCm fork compiles in about 60 seconds:

=== gsplat (ROCm fork) build SUCCEEDED ===

gsplat import OK

=== gsplat fully functional ===Full Output, No Compromises

With both flash-attention and gsplat compiled natively, WorldMirror 2.0 produces its complete output set on Strix Halo:

| Output | Status | Format |

|---|---|---|

| Gaussian splats | Working | .ply (3DGS format, ~4 MB per scene) |

| 3D point clouds | Working | Colored .ply |

| Depth maps | Working | .npy (raw) + .png (visualization) |

| Surface normals | Working | .png (RGB visualization) |

| Camera poses & intrinsics | Working | JSON |

| COLMAP sparse reconstruction | Working | Binary (cameras.bin, images.bin, points3D.bin) |

The Gaussian splats are the crown jewel, they encode the full 3D scene as a set of ~60K gaussians (after voxel pruning from ~400K) that can be rendered from arbitrary viewpoints. You can import the .ply files into any 3DGS-compatible viewer (SuperSplat, Luma, or the growing list of Blender/Unity plugins) for real-time exploration of the reconstructed scene.

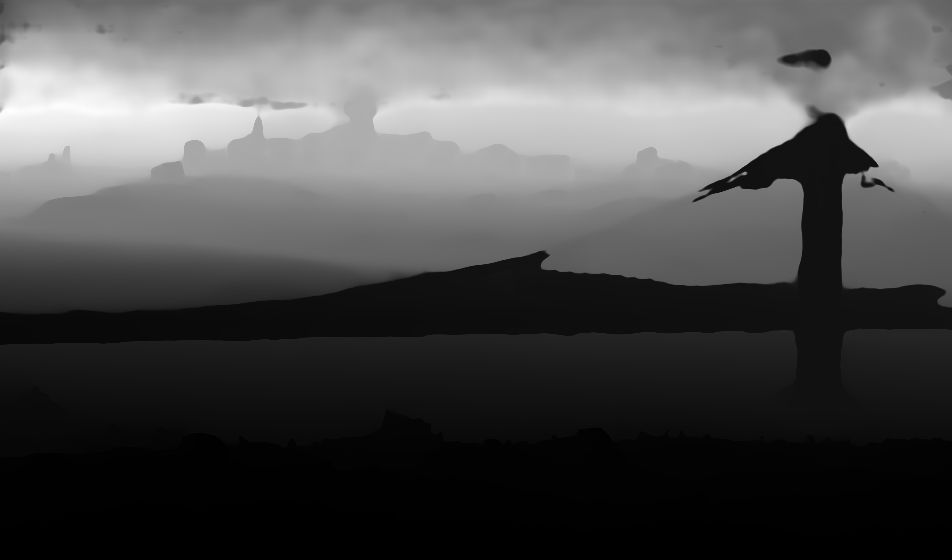

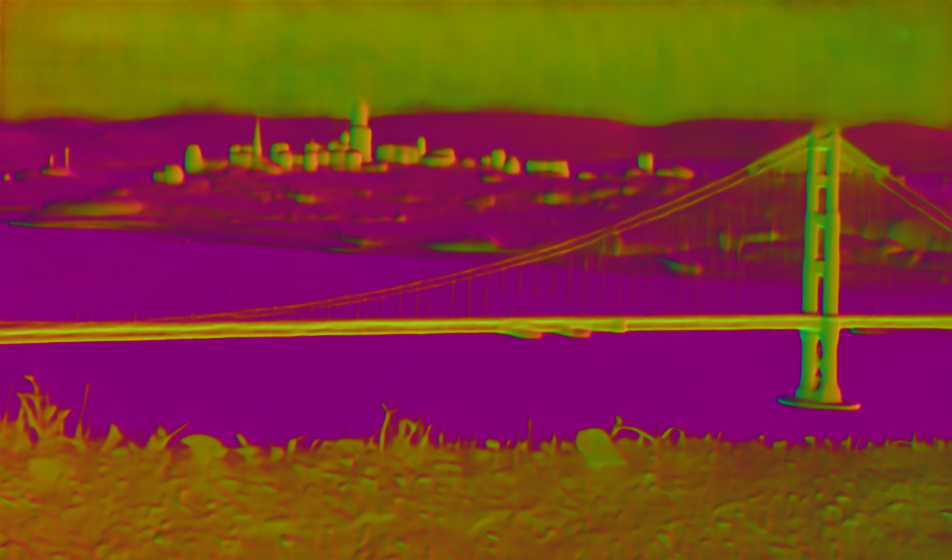

Sample Results

Here’s a test run using a photo of the Golden Gate Bridge. The model takes a single input image and produces depth, surface normals, and a full 3D Gaussian splat reconstruction:

The depth and normal maps capture the broad scene layout but with soft edges and muddy boundaries, typical of single-image monocular estimation with no stereo baseline. The splat render re-renders the scene’s ~58K gaussians from the original camera angle via gsplat rasterization. It captures the overall structure but shows semi-transparent edges and softened detail. Novel viewpoints degrade further since the model can only place splats where it has pixel data. For better results, feed multiple images of the same scene.

Inference Performance

Running on a single test image at the default resolution (952px longest edge), bf16 precision via flash-attention CK, full output with Gaussian splatting:

| Stage | Time |

|---|---|

| Model inference | 66.7s |

| Compute filter mask | 2.3s |

| Save outputs (parallel) | 0.6s |

| Total | 69.6s |

The model downloads are ~2.5 GB on first run (cached via a mounted HuggingFace cache volume). The inference time includes the full forward pass through the ~1.2B parameter ViT backbone, DPT heads, and Gaussian splatting head, running in bf16 on the Radeon 8060S via ROCm HIP. The GS head adds ~25 seconds compared to running with it disabled, as it needs to predict and process splat attributes for the full scene.

The Docker Setup

The full stack runs in a Docker container based on rocm/pytorch:latest (PyTorch 2.9.1, ROCm 7.2.1). The Dockerfile:

- Clones HY-World 2.0

- Installs Python dependencies (with the CUDA gsplat wheel stripped out)

- Builds flash-attention from source with CK RDNA3 support (~2h15m)

- Builds gsplat from AMD’s ROCm fork with GLM, CUDA-compat, and wave32 patches (~1m)

- Patches the attention import if flash-attention failed (not needed since it succeeded)

Running it:

cd ~/docker/hyworld2

./run.sh /path/to/images /path/to/outputThe run script auto-detects what built successfully and configures the flags accordingly: --enable_bf16 if flash-attention is present, full GS output if gsplat compiled natively.

For multi-view reconstruction (the primary use case), put multiple images of the same scene in the input directory. For video input, point --input_path at a video file. The model handles variable-count inputs and automatically extracts frames from video with motion-aware sampling.

What’s Next

The rest of the HY-World 2.0 pipeline (panorama generation via HY-Pano-2, trajectory planning via WorldNav, and world expansion via WorldStereo 2.0) is marked “coming soon” in the repo. When those components release, they’ll face the same CUDA-only assumptions, but the pattern established here, ROCm Docker base, source-build flash-attention, patched gsplat with wave32 fixes, should apply directly.

Practical Takeaway

HY-World 2.0’s WorldMirror 2.0 runs on Strix Halo via ROCm Docker with full CUDA parity. Flash-attention compiles cleanly via the CK backend (two hours to build). gsplat compiles from AMD’s ROCm fork after three targeted patches: system GLM headers to bypass hipify path corruption, CUDA version defines to satisfy GLM’s platform checks, and wave32 constants to match RDNA 3.5’s wavefront width. The result is complete 3D reconstruction output, depth, normals, point clouds, camera parameters, and Gaussian splats, identical to what you’d get on an NVIDIA GPU. Memory is a non-issue: the ~1.2B parameter model fits trivially in 128 GB of unified memory with room to spare.